TL;DR: OpenAI's record $122 billion funding round is easy to read as a valuation headline. The more interesting story is what that money is actually for: chips, data centers, electricity, cooling, and the physical infrastructure needed to run AI at global scale. Major tech companies are planning $610-635 billion in AI and data center spending in 2026 alone. Investors aren't just betting on a chatbot. They're betting that compute access is becoming a moat—even if the costs, bottlenecks, and execution risks are enormous.

OpenAI closed a record $122 billion funding round on March 31, 2026, landing at roughly an $852 billion post-money valuation. That's the kind of number that makes your brain briefly stop loading.

But the most interesting question isn't "How can a company be worth that?" It's "What on earth does it need that much money for?"

Follow the money, and the story shifts from finance headline to industrial buildout. The number is flashy. What's underneath it is the real plot.

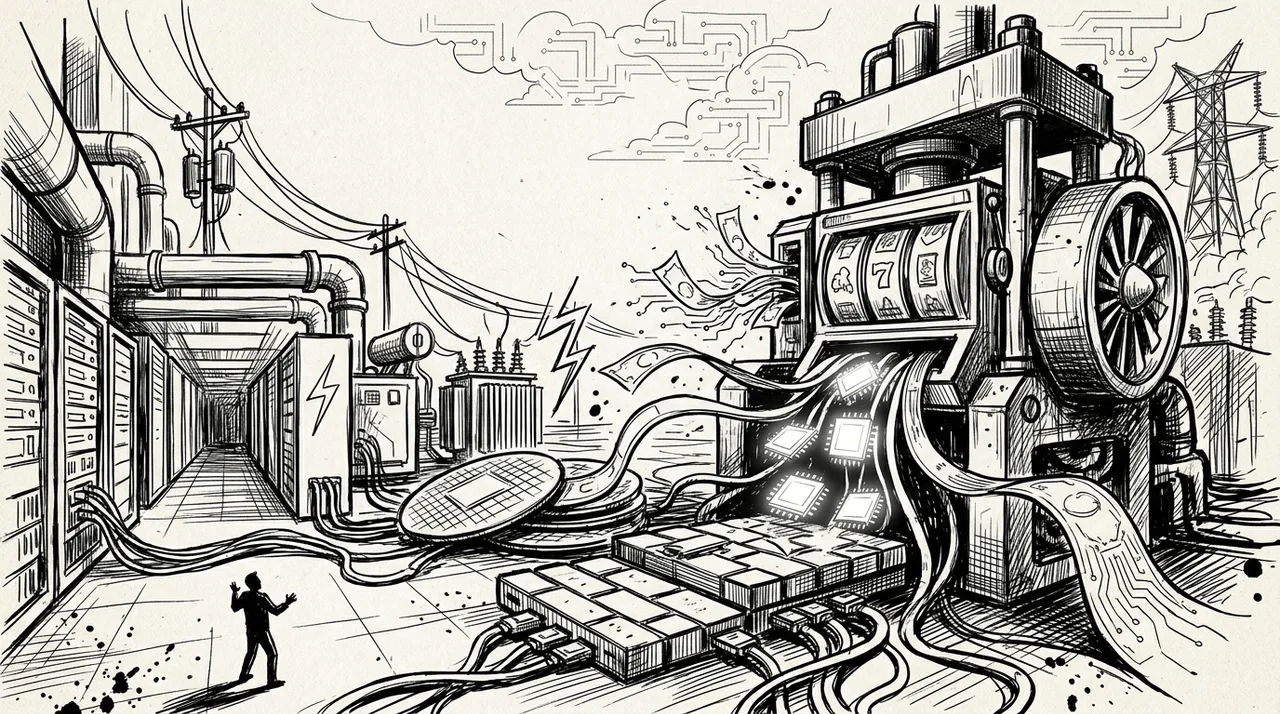

This Is an Infrastructure Story

Most coverage has zeroed in on the raise size and the valuation. Fair enough: those numbers are objectively loud. But OpenAI's statements and the reporting around the deal point to something more concrete. The money is aimed at AI chips, data centers, compute infrastructure, talent, and R&D.

That's a meaningful signal. Frontier AI is no longer just a software story. It's increasingly a capital-intensive industrial one, the kind that requires power plants, not just programmers.

The investor list makes this obvious once you look at it that way. The round was co-led by SoftBank and Andreessen Horowitz, with major commitments from Nvidia, Microsoft, Amazon, BlackRock, Blackstone, Fidelity, TPG, T. Rowe Price, D.E. Shaw, and MGX. Several of those names sit directly in the supply chain of chips, cloud computing, and infrastructure finance. They're not just buying equity. They're buying into a pipeline.

What $122 Billion Actually Buys

Chips. Frontier AI runs on enormous fleets of specialized accelerators, not ordinary servers. OpenAI has a letter of intent with Nvidia for at least 10 gigawatts of systems, with the first 1 gigawatt planned on Nvidia's Vera Rubin platform in the second half of 2026. That's millions of GPUs. OpenAI is also co-developing custom chips with Broadcom, pushing its total hardware commitments to an estimated 26 gigawatts across partners. When you're designing your own silicon, chip supply has stopped being a procurement question and started being a strategic one.
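To sanity-check the "millions of GPUs" claim, here is a rough sketch that converts gigawatts into accelerator counts. The per-GPU power figures are an assumption for illustration (an all-in number covering the chip plus its share of cooling, networking, and host servers), not something the reporting specifies.

```python
COMMITTED_GW = 10  # Nvidia letter of intent, from the text

# ASSUMPTION (not from the article): all-in facility power per accelerator,
# in kilowatts, including cooling, networking, and host-server overhead.
ASSUMED_KW_PER_GPU = (1.0, 2.0)


def gpus_supported(gigawatts: float, kw_per_gpu: float) -> float:
    """Number of accelerators a given power budget could run."""
    kilowatts = gigawatts * 1_000_000  # 1 GW = 1,000,000 kW
    return kilowatts / kw_per_gpu


for kw in ASSUMED_KW_PER_GPU:
    millions = gpus_supported(COMMITTED_GW, kw) / 1e6
    print(f"{kw:.1f} kW per GPU -> about {millions:.0f} million GPUs")
```

Under those assumed power figures, 10 gigawatts lands somewhere between 5 and 10 million accelerators, so "millions" holds even if the real per-GPU number is off by a fair margin.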

Data centers. All those chips need purpose-built homes. AI-optimized data centers cost $20 million or more per megawatt to construct, compared to roughly $10-12 million for standard facilities. A 50-megawatt site can exceed $1 billion before a single chip goes inside. These aren't boring server rooms. They're dense, high-heat, high-bandwidth industrial facilities requiring ultra-fast networking and aggressive cooling systems.
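The $1 billion figure is just cost per megawatt times capacity. A minimal sketch using the numbers above (the function and variable names are illustrative, and real projects will vary by site and design):

```python
AI_COST_PER_MW_USD = 20e6                 # "$20 million or more", from the text
STANDARD_COST_PER_MW_USD = (10e6, 12e6)   # "roughly $10-12 million", from the text


def shell_cost_usd(capacity_mw: float, cost_per_mw_usd: float) -> float:
    """Construction cost of the facility alone, before any chips go inside."""
    return capacity_mw * cost_per_mw_usd


site_mw = 50
ai_site = shell_cost_usd(site_mw, AI_COST_PER_MW_USD)
standard_low = shell_cost_usd(site_mw, STANDARD_COST_PER_MW_USD[0])
standard_high = shell_cost_usd(site_mw, STANDARD_COST_PER_MW_USD[1])

print(f"{site_mw} MW AI site: ${ai_site / 1e9:.2f}B")
print(f"{site_mw} MW standard site: ${standard_low / 1e9:.2f}B to ${standard_high / 1e9:.2f}B")
```

Since $20 million is the floor for AI-optimized builds, the $1 billion result is a minimum for a 50-megawatt site, roughly double what a standard facility of the same size would cost.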

Power and physical capacity. This is the part that still sounds fake until you run the numbers. Stargate, the infrastructure initiative tied to OpenAI, SoftBank, Oracle, and MGX, is targeting roughly $500 billion in investment by 2029, with planned capacity in the 7-10 gigawatt range across U.S. sites. Even the low end of that range, 7 gigawatts, is roughly the output of seven large nuclear reactors at about 1 gigawatt each.

That's not a metaphor for "a lot." It is a lot.
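Running the numbers looks something like this. The sketch divides the Stargate target by the planned capacity range and converts gigawatts into reactor equivalents. Two assumptions are baked in: a large reactor is taken as about 1 gigawatt, and the full $500 billion is spread evenly over capacity, which the reporting does not actually break down.

```python
STARGATE_BUDGET_USD = 500e9      # from the text
CAPACITY_RANGE_GW = (7, 10)      # from the text

# ASSUMPTION: a large nuclear reactor produces roughly 1 GW of electricity.
ASSUMED_REACTOR_GW = 1.0


def budget_per_mw_usd(budget_usd: float, capacity_gw: float) -> float:
    """Total budget divided by capacity, expressed per megawatt."""
    return budget_usd / (capacity_gw * 1000)  # 1 GW = 1,000 MW


for gw in CAPACITY_RANGE_GW:
    per_mw = budget_per_mw_usd(STARGATE_BUDGET_USD, gw)
    reactors = gw / ASSUMED_REACTOR_GW
    print(f"{gw} GW: about {reactors:.0f} reactors, ${per_mw / 1e6:.1f}M per MW")
```

That works out to roughly $50-71 million per megawatt, well above the $20 million construction figure. The gap is plausible if the headline number also covers chips and other equipment, but treat this as a ratio, not a cost breakdown.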

Why It's So Expensive to Stay On

Training frontier models is costly, but that's only part of the bill. Running them for hundreds of millions of people is expensive too—and ongoing.

Every time you type a prompt and get a response, that's inference: compute consumed in real time. OpenAI had 900 million weekly active users and $2 billion in monthly revenue as of March 2026. Reported inference costs quadrupled in 2025 alone, and adjusted gross margins fell from 40% to 33%. OpenAI is targeting roughly $600 billion in total compute spending by 2030.
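To see what a seven-point margin drop means in dollars, here is a back-of-envelope sketch. It holds revenue fixed at the March 2026 figure, which is a simplifying assumption: the 40% and 33% margins were likely measured against different revenue bases, and "adjusted" gross margin may exclude some costs.

```python
MONTHLY_REVENUE_USD = 2e9  # from the text, as of March 2026


def cost_of_revenue(revenue_usd: float, gross_margin: float) -> float:
    """Cost of revenue implied by a gross margin: revenue * (1 - margin)."""
    return revenue_usd * (1 - gross_margin)


# ASSUMPTION: both margins are applied to the same monthly revenue.
cost_at_40 = cost_of_revenue(MONTHLY_REVENUE_USD, 0.40)
cost_at_33 = cost_of_revenue(MONTHLY_REVENUE_USD, 0.33)
extra = cost_at_33 - cost_at_40

print(f"Cost at 40% margin: ${cost_at_40 / 1e9:.2f}B per month")
print(f"Cost at 33% margin: ${cost_at_33 / 1e9:.2f}B per month")
print(f"Difference: ${extra / 1e6:.0f}M per month")
```

At $2 billion in monthly revenue, each margin point is worth $20 million a month, so a seven-point slide is about $140 million a month in additional cost to serve the same revenue.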

These aren't cheap internet products with near-zero marginal costs. They're expensive services running on top of an increasingly utility-scale infrastructure stack.

Why Investors Are Still Writing Checks

Part of it is scale. OpenAI has user growth, revenue, and a platform footprint that looks less like an app and more like a layer of computing infrastructure. If AI becomes as foundational as cloud computing, early positions in leading platforms could be extremely valuable.

Part of it is that some investors benefit from the buildout itself, regardless of OpenAI's profitability. Chip suppliers, cloud providers, infrastructure financiers, and networking companies all profit from construction. This is as much a bet on the supply chain as on any single company.

And OpenAI isn't alone. Big Tech is collectively planning $610-635 billion in AI and data center capex in 2026, roughly triple 2024 levels. Global data center capex by top operators is nearing $750 billion. Anthropic, backed heavily by Amazon, is also scaling aggressively. This is an industry-wide race, and sitting it out looks increasingly costly.

The Part That Doesn't Come Easy

Throwing billions at infrastructure doesn't repeal physics or procurement timelines.

New AI data centers take 12 to 24 months to build, while Nvidia is now shipping major new chip generations annually. That gap creates real risk: you can break ground designing for today's hardware and open the doors to something already one generation old. Very expensive yesterday is still yesterday.
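The timing mismatch is easy to put in numbers. This sketch counts how many chip generations ship while a site is under construction, assuming a strict 12-month release cadence and a design locked to the hardware available at groundbreaking (both simplifications).

```python
def generations_shipped_during_build(build_months: int, cadence_months: int = 12) -> int:
    """Whole chip generations released between groundbreaking and opening."""
    return build_months // cadence_months


# Build times from the text: 12 to 24 months.
for months in (12, 18, 24):
    behind = generations_shipped_during_build(months)
    print(f"{months}-month build: {behind} generation(s) behind at opening")
```

A 12- or 18-month build opens one generation behind, and a 24-month build opens two behind, unless the design leaves room to swap in newer hardware late in construction.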

Power is another hard constraint. In many markets, available grid capacity is harder to secure than construction permits. You can have land, money, and ambition and still spend months waiting for electricity.

Even marquee projects hit friction. Oracle and OpenAI reportedly dropped plans to expand their flagship Abilene, Texas site in early 2026 after financing talks stalled and hardware needs shifted. More than 23 gigawatts of data center capacity was under construction globally as of late 2025—but "under construction" is not the same as "on schedule."

What This Round Is Really Signaling

OpenAI's $122 billion raise is less a strange startup milestone and more a marker of what AI has become: a compute and infrastructure contest as much as a software one.

The overlooked story in any future AI headline is the physical layer underneath it—chips, power, construction, networking, cooling, and the ongoing cost of serving hundreds of millions of people. The software still matters. But it now rides on top of an enormous industrial stack.

Next time an AI headline sounds like a finance story, it's worth asking a second question: what infrastructure is that number actually trying to build?

Sources

- TechCrunch, March 31, 2026: OpenAI's $122 billion raise, investor roster, and use of proceeds

- The Information, March 2026: Round size and pre-money valuation

- Constellation Research, March 2026: OpenAI revenue, weekly active users, and infrastructure direction

- CNBC and Reuters, February 2026: OpenAI's $600 billion compute spending target through 2030; inference cost growth; gross margin decline

- WIRED and Reuters, 2025: Stargate expansion plans and gigawatt-scale infrastructure across U.S. sites

- Tom's Hardware and OpenAI partnership reporting, 2025-2026: Nvidia's 10 GW systems plan; Broadcom custom chip co-development; 26 GW total hardware commitments

- Data Center Dynamics and IntuitionLabs, 2025: Stargate project structure, site details, and build costs

- Bloomberg and Reuters, March 2026: Oracle-OpenAI Texas expansion dropped; hardware refresh risk; financing friction

- AIToolDiscovery and construction industry data, 2025-2026: AI data center cost per megawatt; power and cooling constraints

- BloombergNEF, late 2025: Global data center capex and gigawatts under construction